When Code Gets Cheap, Architecture Gets Scarce

AI makes it easy to produce more code. It makes it harder to protect the shape of a system. That is the shift most engineering organizations have not fully priced in.

This is the fourth post in a series on what AI is actually changing in software engineering and engineering management. Earlier posts: coding got faster, delivery did not · the new management problem is not adoption · repository memory and the risk of teaching AI your mistakes.

This post expands on a thread I posted on LinkedIn.

The assumption that does not hold

When code generation gets cheaper, the natural assumption is that engineering gets easier. The main bottleneck — the time it takes to write working code — starts to shrink. Output goes up. The team moves faster. Problem solved.

I do not think that is what actually happens.

When code gets cheaper to produce, the constraint shifts. It moves away from implementation and toward the decisions that determine whether implementation was worth doing at all. Problem framing. Boundary-setting. Simplification. The judgment to look at a proposed change and decide the right answer is less code, not more.

Architecture does not get devalued when AI can write more of the code. It gets exposed. The decisions that were previously obscured by implementation work are now the visible bottleneck. And those decisions are harder to make, not easier, when plausible implementations start arriving faster than the problem has been properly understood.

What "more code" looks like in practice

Generated output has a way of making complexity look productive. The code arrives quickly. Options multiply. Plausible implementations appear early, before anyone has spent enough time asking whether the problem was framed correctly in the first place.

This is where architectural clarity starts to erode: not in one dramatic failure, but in a steady accumulation of almost-reasonable changes. Each one respects local patterns. Each one passes review. Each one adds slightly more surface area than the problem requires, makes the system a little harder to understand, and leaves behind one more abstraction that will need to be worked around next quarter.

None of it looks wrong at the time. That is the problem.

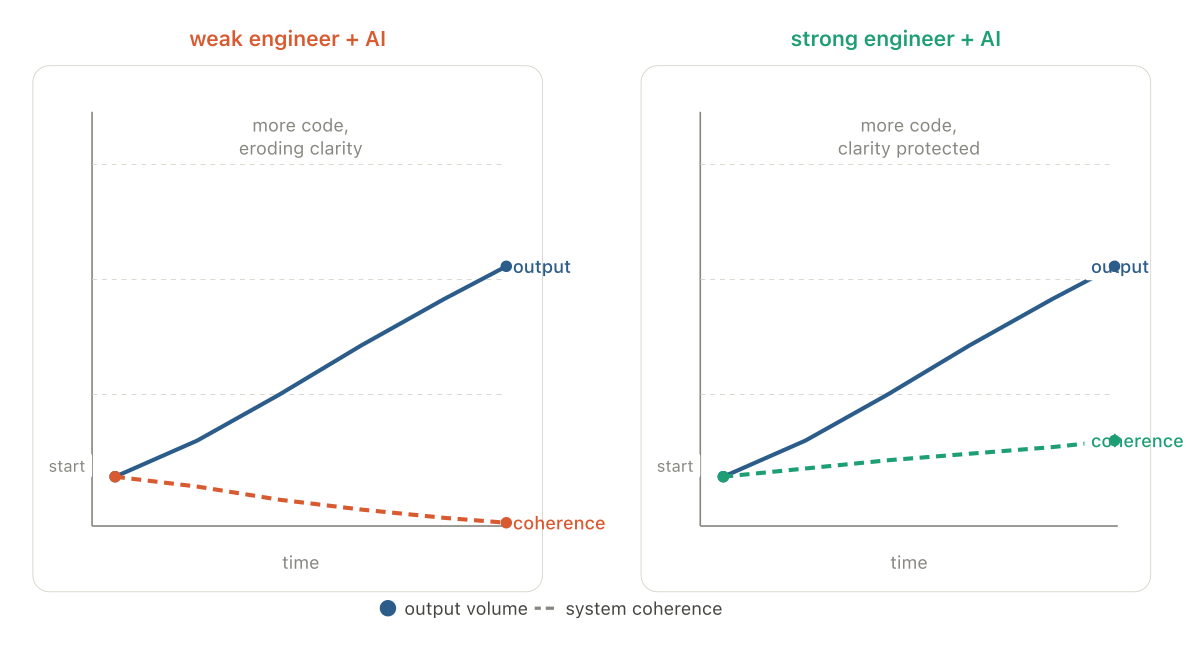

A weak engineer with AI can produce more code. A strong engineer with AI can often remove more code. Both can look equally productive for a while — the weak engineer's PRs keep getting merged, the velocity metrics look fine, and the system quietly becomes harder to reason about.

Why this is a management problem

The most important question is not whether AI can write the code. It is who is protecting the shape of the system while the volume of generated code goes up.

That question has a management answer, not just a technical one. If the team is primarily rewarded for output — features shipped, tickets closed, pull requests merged — then the incentive runs against the work that keeps a system coherent. Simplification is invisible. Saying no to a change that adds more surface area than value does not show up in anyone's velocity metrics. Removing code that should not have been written in the first place looks like negative progress on a burndown chart.

AI makes this tension sharper. When output is cheap to generate, the temptation to measure output is stronger. And when output is the primary signal, the engineers who are doing the harder work — protecting clarity, pushing back on unnecessary complexity, insisting on better problem framing before implementation starts — can look less productive than the ones who are not.

The management job is not to maximize generated output. It is to make sure the team is still rewarded for simplification, good boundaries, and saying no to changes that add more surface area than value.

What protecting clarity actually looks like

This is not an argument for slowing down or adding process. It is an argument for being explicit about what the organization values, because AI makes it easier than ever to ship volume without shipping clarity.

A few things that tend to matter in practice:

Problem framing before implementation. When code can be generated quickly, the temptation is to start generating early and adjust from there. The teams that do this well resist that pull. They spend time on what is being built and why before anything gets written. The cost of implementation has dropped; the cost of building the wrong thing has not.

Rewarding removal. Deleting code, consolidating duplicate implementations, collapsing abstractions that no longer carry their weight — this is skilled work. It is harder to do well than generating new code, and it often makes more difference to the long-term health of the system. If the team's culture treats this as maintenance rather than engineering, the system will drift.
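One way to make removal work at least visible is to look at net line change alongside the usual output counts. The sketch below is a rough illustration, not a recommended metric: it assumes the input comes from `git log --numstat --format=@%an`, and the function name, the sample data, and the author names are all hypothetical. Net lines removed says nothing about whether a deletion was a good design decision. It only surfaces work that a merged-PR count would hide.

```python
from collections import defaultdict


def net_lines_by_author(log_text: str) -> dict[str, dict[str, int]]:
    """Summarise added, deleted, and net lines per author.

    Assumes `log_text` is the output of:
        git log --numstat --format=@%an
    where each commit starts with an "@<author name>" line followed by
    tab-separated "<added>\t<deleted>\t<path>" lines.
    """
    totals: dict[str, dict[str, int]] = defaultdict(
        lambda: {"added": 0, "deleted": 0}
    )
    author = None
    for line in log_text.splitlines():
        if line.startswith("@"):
            # Start of a new commit: remember who wrote it.
            author = line[1:].strip()
            continue
        parts = line.split("\t")
        if author is None or len(parts) != 3:
            continue  # blank separator lines or anything unexpected
        added, deleted, _path = parts
        if added == "-" or deleted == "-":
            continue  # git reports "-" for binary files
        totals[author]["added"] += int(added)
        totals[author]["deleted"] += int(deleted)
    return {
        name: {**t, "net": t["added"] - t["deleted"]}
        for name, t in totals.items()
    }


# Hypothetical sample: one commit that adds a feature, one that removes an adapter.
SAMPLE_LOG = (
    "@Ana\n\n"
    "120\t4\tsrc/new_feature.py\n"
    "@Ben\n\n"
    "3\t210\tsrc/legacy_adapter.py\n"
    "-\t-\tdocs/diagram.png\n"
)

if __name__ == "__main__":
    for name, stats in net_lines_by_author(SAMPLE_LOG).items():
        print(name, stats)
```

In this made-up sample both authors merged one commit, so a merge count treats them the same, while the net figure shows that one of them left the codebase smaller. A number like this is best used as a prompt for a conversation about what was removed and why, not as a target.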

Reviewing for design, not just correctness. As AI increases PR volume, the review process tends to compress. Reviewers check whether the code works, not whether the change was the right one. The review question that matters most — is this the right design, in the right place, solving the right problem — gets asked less often under volume pressure. That question needs to stay in the process deliberately, not survive by accident.

AI can help teams build faster. It can also make it much easier to bury a system under well-formed code that should not have existed in the first place. The strongest engineers in this environment will not be the ones who generate the most. They will be the ones who keep the system coherent while the volume around them keeps rising.

The next post in the series looks at what happens to code review itself when the volume goes up and the contributor is increasingly a system rather than a person — and what reviewers need to do differently as a result.